
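In the browser, we usually play audio with the audio tag:

<audio controls>
  <source src="myAudio.mp3" type="audio/mpeg">
  <source src="myAudio.ogg" type="audio/ogg">
</audio>

The audio tag is simple to use, but it has limitations: it only controls basics such as playback, pausing, and volume. For finer control over the audio, such as merging and splitting channels, reverb, pitch, or audio amplitude compression, the audio tag alone is not enough. To solve this, we need the Web Audio API. The page below uses it to play an audio file selected by the user: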

<!DOCTYPE html>
<html lang="en">
<head>
  <meta charset="UTF-8">
  <meta http-equiv="X-UA-Compatible" content="IE=edge">
  <meta name="viewport" content="width=device-width, initial-scale=1.0">
  <title>Playing audio with the Web Audio API</title>
</head>
<body>
  <input id="audioFile" type="file" accept="audio/*"/>
  <script>
    const inputFile = document.querySelector("#audioFile");
    inputFile.onchange = function(event) {
      const file = event.target.files[0];
      const reader = new FileReader();
      // Read the selected file as raw bytes.
      reader.readAsArrayBuffer(file);
      reader.onload = evt => {
        const encodedBuffer = evt.currentTarget.result;
        const context = new AudioContext();
        // Decode the encoded bytes into an AudioBuffer.
        context.decodeAudioData(encodedBuffer, decodedBuffer => {
          const dataSource = context.createBufferSource();
          dataSource.buffer = decodedBuffer;
          // Route the source straight to the speakers and start playback.
          dataSource.connect(context.destination);
          dataSource.start();
        });
      };
    };
  </script>
</body>
</html>
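The same playback flow can also be written with promises instead of callbacks. The sketch below is an alternative, not the listing's own code: `playFile` is a hypothetical helper name, and it assumes a browser that supports `Blob.arrayBuffer()` and the promise form of `decodeAudioData`.

```javascript
// Hypothetical helper: `context` is expected to behave like an AudioContext
// and `file` like a File/Blob picked from <input type="file">.
async function playFile(context, file) {
  // Read the file's raw bytes (assumes Blob.arrayBuffer() is available).
  const encodedBuffer = await file.arrayBuffer();
  // Decode the bytes into an AudioBuffer (promise form of decodeAudioData).
  const decodedBuffer = await context.decodeAudioData(encodedBuffer);
  // Wrap the decoded data in a source node, route it to the output, and play.
  const dataSource = context.createBufferSource();
  dataSource.buffer = decodedBuffer;
  dataSource.connect(context.destination);
  dataSource.start();
  return dataSource;
}
```

In the page above, this could be called from the change handler, for example `inputFile.onchange = e => playFile(new AudioContext(), e.target.files[0]);`. Passing the context in as a parameter keeps the helper easy to exercise with a stand-in object.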

In the playback page above, we use the FileReader API to read the audio file's data. We then create an AudioContext object and call its decodeAudioData method to decode the audio. Once we have the decoded data, we create an AudioBufferSourceNode object to hold it, connect that node to context.destination, and finally call start to play the audio.

To visualize the audio, the same handler is extended to create an analyser and start drawing:

inputFile.onchange = function(event) {
  const file = event.target.files[0];
  const reader = new FileReader();
  reader.readAsArrayBuffer(file);
  reader.onload = evt => {
    const encodedBuffer = evt.currentTarget.result;
    const context = new AudioContext();
    context.decodeAudioData(encodedBuffer, decodedBuffer => {
      const dataSource = context.createBufferSource();
      dataSource.buffer = decodedBuffer;
      // analyser, bufferLength and frequencyData are declared outside the handler;
      // createAnalyser and drawBar appear in the complete listing.
      analyser = createAnalyser(context, dataSource);
      bufferLength = analyser.frequencyBinCount;
      frequencyData = new Uint8Array(bufferLength);
      dataSource.start();
      drawBar();
    });
  };
};

2. Getting the audio frequency data

const analyser = audioCtx.createAnalyser();
analyser.fftSize = 512;
const bufferLength = analyser.frequencyBinCount;
const dataArray = new Uint8Array(bufferLength);
analyser.getByteFrequencyData(dataArray);

The getByteFrequencyData() method of the AnalyserNode object copies the current frequency data into the Uint8Array passed to it.
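To make these numbers concrete: frequencyBinCount is half of fftSize, so fftSize = 512 gives 256 bins, and bin i corresponds to roughly i * sampleRate / fftSize Hz. The helpers below are hypothetical names, not part of the Web Audio API. The 44100 Hz sample rate is an assumption, and the decibel conversion assumes the AnalyserNode defaults of minDecibels = -100 and maxDecibels = -30. A minimal sketch:

```javascript
// Hypothetical helpers for interpreting AnalyserNode output.

// Approximate frequency (Hz) that a given bin index corresponds to.
function binToFrequency(binIndex, sampleRate, fftSize) {
  return (binIndex * sampleRate) / fftSize;
}

// Approximate inverse of the 0..255 scaling used by getByteFrequencyData():
// 0 maps to minDecibels and 255 maps to maxDecibels.
function byteToDecibels(byteValue, minDecibels = -100, maxDecibels = -30) {
  return minDecibels + (byteValue / 255) * (maxDecibels - minDecibels);
}

const fftSize = 512;
const sampleRate = 44100; // assumed; a real AudioContext reports its own sampleRate
const binCount = fftSize / 2;

console.log(binCount);                               // 256
console.log(binToFrequency(1, sampleRate, fftSize)); // 86.1328125
console.log(byteToDecibels(255));                    // -30
```

Because the byte values are quantized and clipped to that decibel range, the conversion back to decibels is only an estimate.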

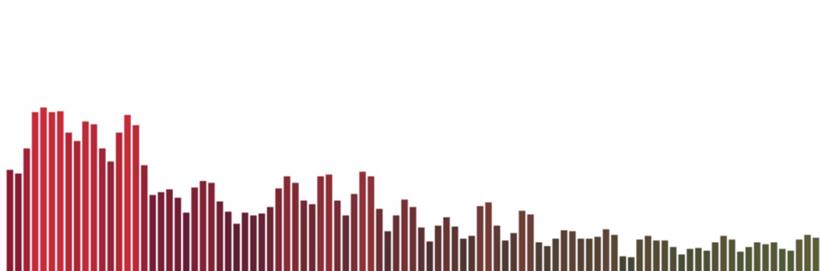

function drawBar() {
  // Schedule the next frame, then redraw using the latest frequency data.
  requestAnimationFrame(drawBar);
  analyser.getByteFrequencyData(frequencyData);
  canvasContext.clearRect(0, 0, canvasWidth, canvasHeight);
  let barHeight, barWidth, r, g, b;
  for (let i = 0, x = 0; i < bufferLength; i++) {
    // Each byte (0..255) is used directly as the bar height in pixels.
    barHeight = frequencyData[i];
    barWidth = canvasWidth / bufferLength * 2;
    // Color shifts with both the bar height and the bin index.
    r = barHeight + 25 * (i / bufferLength);
    g = 250 * (i / bufferLength);
    b = 50;
    canvasContext.fillStyle = "rgb(" + r + "," + g + "," + b + ")";
    canvasContext.fillRect(x, canvasHeight - barHeight, barWidth, barHeight);
    x += barWidth + 2;
  }
}
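The layout arithmetic in drawBar() can be checked without a canvas. The sketch below uses a hypothetical helper, `computeBars`, that applies the same formulas to plain data. One thing it makes visible: because each bar is twice the even share of the width plus a 2px gap, at most about half of the bins land inside the canvas. The sample data and canvas size are made up for illustration.

```javascript
// Hypothetical helper: computes the rectangles drawBar() would draw,
// using the same width, height, position and color formulas.
function computeBars(frequencyData, canvasWidth, canvasHeight, gap = 2) {
  const bufferLength = frequencyData.length;
  const barWidth = (canvasWidth / bufferLength) * 2;
  const bars = [];
  let x = 0;
  for (let i = 0; i < bufferLength; i++) {
    const barHeight = frequencyData[i];
    const r = barHeight + 25 * (i / bufferLength);
    const g = 250 * (i / bufferLength);
    const b = 50;
    bars.push({
      x,
      y: canvasHeight - barHeight, // bars grow upward from the bottom edge
      width: barWidth,
      height: barHeight,
      color: `rgb(${r},${g},${b})`
    });
    x += barWidth + gap;
  }
  return bars;
}

// Made-up sample: 4 bins on a 400x300 canvas.
const bars = computeBars(new Uint8Array([0, 128, 255, 64]), 400, 300);
const visibleBars = bars.filter(bar => bar.x < 400).length;
console.log(bars.length, visibleBars); // 4 2
```

If every bin should be visible, one option would be to drop the `* 2` factor and subtract the gap from the bar width, at the cost of thinner bars.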

Having walked through the steps above, let's look at the complete code:

<!DOCTYPE html>
<html lang="en">
<head>
  <meta charset="UTF-8">
  <meta http-equiv="X-UA-Compatible" content="IE=edge">
  <meta name="viewport" content="width=device-width, initial-scale=1.0">
  <title>Visualizations with Web Audio API</title>
</head>
<body>
  <input id="audioFile" type="file" accept="audio/*"/>
  <canvas id="canvas"></canvas>
  <script>
    const canvas = document.querySelector("#canvas");
    const inputFile = document.querySelector("#audioFile");
    const canvasWidth = window.innerWidth;
    const canvasHeight = window.innerHeight;
    const canvasContext = canvas.getContext("2d");
    canvas.width = canvasWidth;
    canvas.height = canvasHeight;
    let frequencyData = [], bufferLength = 0, analyser;

    inputFile.onchange = function(event) {
      const file = event.target.files[0];
      const reader = new FileReader();
      reader.readAsArrayBuffer(file);
      reader.onload = evt => {
        const encodedBuffer = evt.currentTarget.result;
        const context = new AudioContext();
        context.decodeAudioData(encodedBuffer, decodedBuffer => {
          const dataSource = context.createBufferSource();
          dataSource.buffer = decodedBuffer;
          analyser = createAnalyser(context, dataSource);
          bufferLength = analyser.frequencyBinCount;
          frequencyData = new Uint8Array(bufferLength);
          dataSource.start();
          drawBar();
        });
      };

      // Insert an AnalyserNode between the source and the speakers.
      function createAnalyser(context, dataSource) {
        const analyser = context.createAnalyser();
        analyser.fftSize = 512;
        dataSource.connect(analyser);
        analyser.connect(context.destination);
        return analyser;
      }

      // Redraw the frequency bars on every animation frame.
      function drawBar() {
        requestAnimationFrame(drawBar);
        analyser.getByteFrequencyData(frequencyData);
        canvasContext.clearRect(0, 0, canvasWidth, canvasHeight);
        let barHeight, barWidth, r, g, b;
        for (let i = 0, x = 0; i < bufferLength; i++) {
          barHeight = frequencyData[i];
          barWidth = canvasWidth / bufferLength * 2;
          r = barHeight + 25 * (i / bufferLength);
          g = 250 * (i / bufferLength);
          b = 50;
          canvasContext.fillStyle = "rgb(" + r + "," + g + "," + b + ")";
          canvasContext.fillRect(x, canvasHeight - barHeight, barWidth, barHeight);
          x += barWidth + 2;
        }
      }
    };
  </script>
</body>
</html>

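Open a page containing the code above in a browser and select an audio file, and you will see a similar graphic.

The graphic above was generated with vudio.js, a third-party library on GitHub. If you have other cool audio visualization effects, feel free to leave me a comment.

Reference:

vudio on GitHub: https://github.com/alex2wong/vudio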