
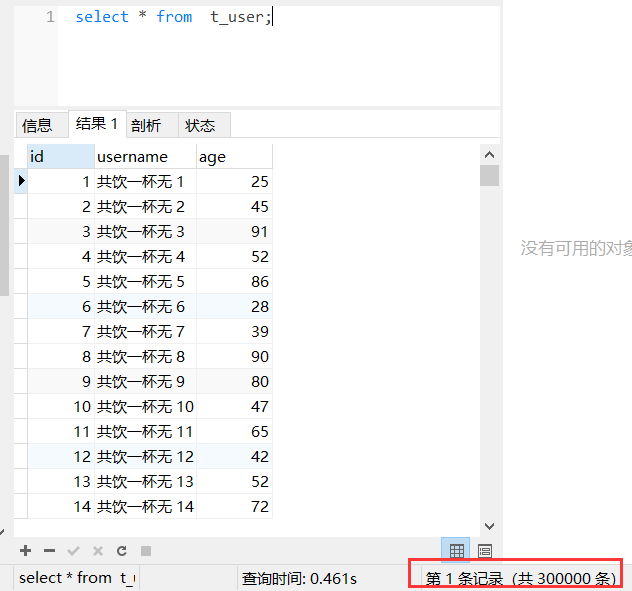

CREATE TABLE `t_user` ( `id` int(11) NOT NULL AUTO_INCREMENT COMMENT 'user id', `username` varchar(64) DEFAULT NULL COMMENT 'user name', `age` int(4) DEFAULT NULL COMMENT 'age', PRIMARY KEY (`id`) ) ENGINE=InnoDB DEFAULT CHARSET=utf8 COMMENT='user information table';

Enough talk, let's get started!

/**

* <p>User entity</p>

*

* @Author zjq

* @Date 2021/8/3

*/

@Data

public class User {

private int id;

private String username;

private int age;

}

Mapper interface:

public interface UserMapper {

/**

* Batch-insert users

* @param userList

*/

void batchInsertUser(@Param("list") List<User> userList);

}

mapper.xml file:

<!-- Batch-insert user information -->

<insert id="batchInsertUser" parameterType="java.util.List">

insert into t_user(username,age) values

<foreach collection="list" item="item" index="index" separator=",">

(

#{item.username},

#{item.age}

)

</foreach>

</insert>

jdbc.properties:

jdbc.driver=com.mysql.jdbc.Driver

jdbc.url=jdbc:mysql://localhost:3306/test

jdbc.username=root

jdbc.password=root

sqlMapConfig.xml:

<?xml version="1.0" encoding="UTF-8" ?>

<!DOCTYPE configuration PUBLIC "-//mybatis.org//DTD Config 3.0//EN" "http://mybatis.org/dtd/mybatis-3-config.dtd">

<configuration>

<!-- Load the external properties file via the properties tag -->

<properties resource="jdbc.properties"></properties>

<!-- Custom type aliases -->

<typeAliases>

<typeAlias type="com.zjq.domain.User" alias="user"></typeAlias>

</typeAliases>

<!-- Data source environment -->

<environments default="development">

<environment id="development">

<transactionManager type="JDBC"></transactionManager>

<dataSource type="POOLED">

<property name="driver" value="${jdbc.driver}"/>

<property name="url" value="${jdbc.url}"/>

<property name="username" value="${jdbc.username}"/>

<property name="password" value="${jdbc.password}"/>

</dataSource>

</environment>

</environments>

<!-- Load the mapper files -->

<mappers>

<mapper resource="com/zjq/mapper/UserMapper.xml"></mapper>

</mappers>

</configuration>

Going all in: inserting everything at once, with no batching

@Test

public void testBatchInsertUser() throws IOException {

InputStream resourceAsStream =

Resources.getResourceAsStream("sqlMapConfig.xml");

SqlSessionFactory sqlSessionFactory = new SqlSessionFactoryBuilder().build(resourceAsStream);

SqlSession session = sqlSessionFactory.openSession();

System.out.println("===== Start inserting data =====");

long startTime = System.currentTimeMillis();

try {

List<User> userList = new ArrayList<>();

for (int i = 1; i <= 300000; i++) {

User user = new User();

user.setId(i);

user.setUsername("共饮一杯无 " + i);

user.setAge((int) (Math.random() * 100));

userList.add(user);

}

session.insert("batchInsertUser", userList); // insert all 300,000 rows in one statement

session.commit();

long spendTime = System.currentTimeMillis()-startTime;

System.out.println("Successfully inserted 300,000 rows in " + spendTime + " ms");

} finally {

session.close();

}

}

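The console output shows:

Cause: com.mysql.jdbc.PacketTooBigException: Packet for query is too large (27759038 > 4194304). You can change this value on the server by setting the max_allowed_packet' variable.

The statement exceeds the maximum packet size. Raising max_allowed_packet would allow a larger payload, but 300,000 rows overshoot the limit by so much (about 26.5 MB against a 4 MB limit) that this is not a sensible fix. Going all in clearly does not work.

If one giant insert is out, what about inserting the rows one at a time in a loop?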

/**

* Insert a single user

* @param user

*/

void insertUser(User user);

<!-- Insert a single user -->

<insert id="insertUser" parameterType="user">

insert into t_user(username,age) values

(

#{username},

#{age}

)

</insert>

The test code is adjusted as follows:

@Test

public void testCirculateInsertUser() throws IOException {

InputStream resourceAsStream =

Resources.getResourceAsStream("sqlMapConfig.xml");

SqlSessionFactory sqlSessionFactory = new SqlSessionFactoryBuilder().build(resourceAsStream);

SqlSession session = sqlSessionFactory.openSession();

System.out.println("===== Start inserting data =====");

long startTime = System.currentTimeMillis();

try {

for (int i = 1; i <= 300000; i++) {

User user = new User();

user.setId(i);

user.setUsername("共饮一杯无 " + i);

user.setAge((int) (Math.random() * 100));

// Insert and commit one row at a time

session.insert("insertUser", user);

session.commit();

}

long spendTime = System.currentTimeMillis()-startTime;

System.out.println("Successfully inserted 300,000 rows in " + spendTime + " ms");

} finally {

session.close();

}

}

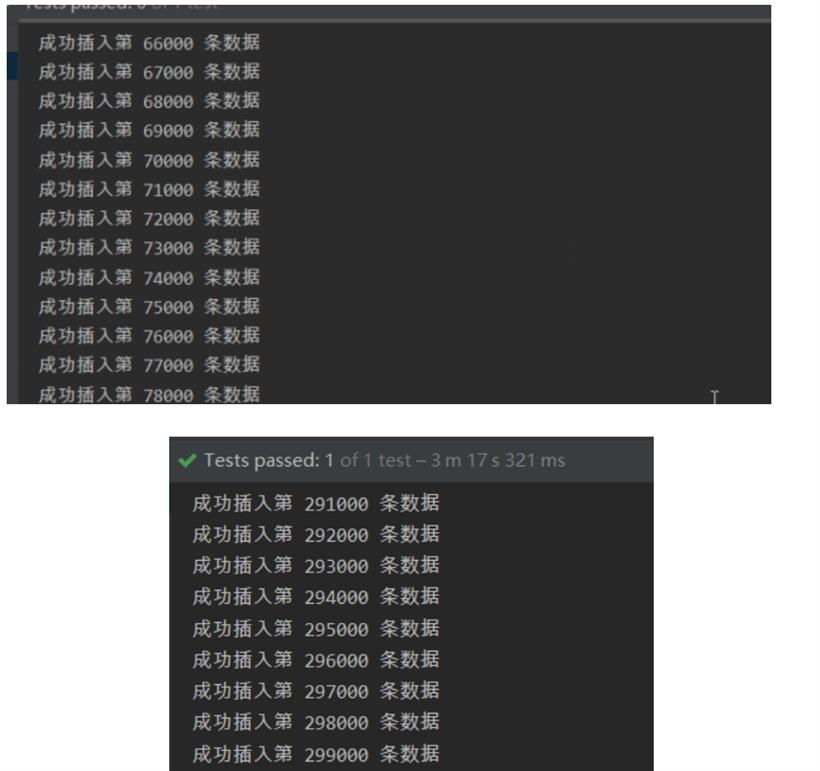

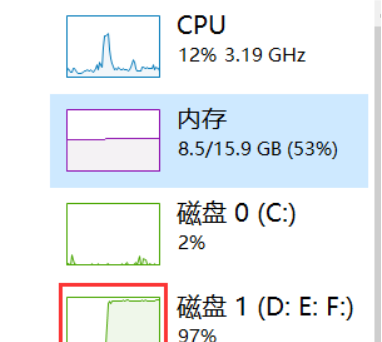

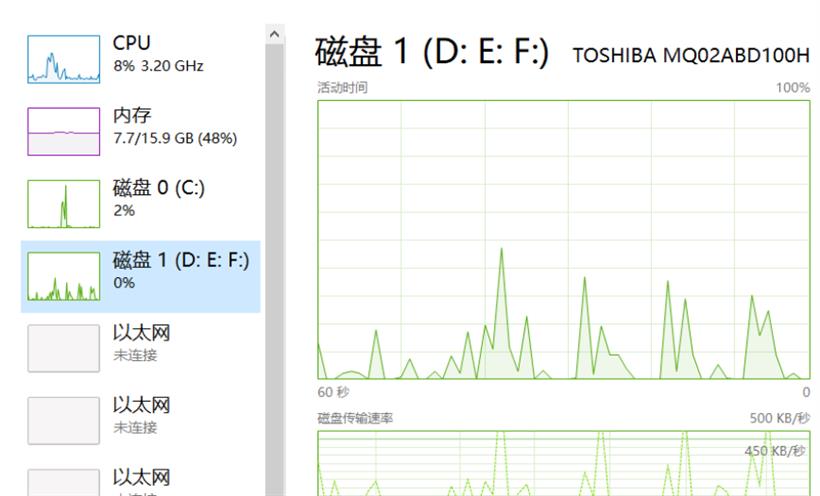

While this runs, disk I/O usage spikes and stays high throughout.

-- Clear the user table

TRUNCATE table t_user;

The following code inserts the 300,000 rows through MyBatis in batches:

/**

* Batch insert, split into chunks

* @throws IOException

*/

@Test

public void testBatchInsertUser() throws IOException {

InputStream resourceAsStream =

Resources.getResourceAsStream("sqlMapConfig.xml");

SqlSessionFactory sqlSessionFactory = new SqlSessionFactoryBuilder().build(resourceAsStream);

SqlSession session = sqlSessionFactory.openSession();

System.out.println("===== Start inserting data =====");

long startTime = System.currentTimeMillis();

int waitTime = 10;

try {

List<User> userList = new ArrayList<>();

for (int i = 1; i <= 300000; i++) {

User user = new User();

user.setId(i);

user.setUsername("共饮一杯无 " + i);

user.setAge((int) (Math.random() * 100));

userList.add(user);

if (i % 1000 == 0) {

session.insert("batchInsertUser", userList);

// Commit the transaction every 1,000 rows

session.commit();

userList.clear();

// Wait for a while before the next batch

Thread.sleep(waitTime * 1000);

}

}

// Finally, insert any remaining rows

if(!CollectionUtils.isEmpty(userList)) {

session.insert("batchInsertUser", userList);

session.commit();

}

long spendTime = System.currentTimeMillis()-startTime;

System.out.println("Successfully inserted 300,000 rows in " + spendTime + " ms");

} catch (Exception e) {

e.printStackTrace();

} finally {

session.close();

}

}

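This version inserts through MyBatis in batches of 1,000 rows, each batch sent as one multi-row INSERT, which speeds up the insert considerably. When inserting in a loop, choose a suitable wait time and batch size to avoid problems such as excessive memory usage. The connection pool and database parameters in the configuration file should also be set sensibly to get better performance.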
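As a rough way to reason about batch size, the figures from the PacketTooBigException shown earlier can be turned into a per-row estimate. The sketch below is only a back-of-the-envelope calculation and not part of the original tests; the class name `BatchSizing`, the method `maxRowsPerStatement`, and the 50% safety margin are assumptions made for illustration.

```java
/** Hypothetical helper: estimates how many rows fit into one multi-row INSERT. */
final class BatchSizing {

    /**
     * @param packetLimitBytes the server's max_allowed_packet, in bytes
     * @param observedBytes    size of a statement that was actually measured
     * @param observedRows     number of rows that statement contained
     * @param safetyFactor     fraction of the limit to use (an assumed margin, e.g. 0.5)
     */
    static long maxRowsPerStatement(long packetLimitBytes, long observedBytes,
                                    int observedRows, double safetyFactor) {
        // Round the per-row size up so the estimate errs on the safe side.
        long bytesPerRow = (observedBytes + observedRows - 1) / observedRows;
        long budget = (long) (packetLimitBytes * safetyFactor);
        return budget / bytesPerRow;
    }

    public static void main(String[] args) {
        // Figures from the error message: 27759038 bytes for 300000 rows, limit 4194304 bytes.
        long rows = maxRowsPerStatement(4_194_304L, 27_759_038L, 300_000, 0.5);
        System.out.println("Estimated max rows per statement: " + rows);
    }
}
```

With these figures the estimate comes out at about 22,500 rows per statement, so batches of 1,000 rows stay far below the packet limit. Actual row sizes depend on the data, so the number is a starting point for measurement rather than a guarantee.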

/**

* Batch insert, split into chunks

* @throws IOException

*/

@Test

public void testBatchInsertUser() throws IOException {

InputStream resourceAsStream =

Resources.getResourceAsStream("sqlMapConfig.xml");

SqlSessionFactory sqlSessionFactory = new SqlSessionFactoryBuilder().build(resourceAsStream);

SqlSession session = sqlSessionFactory.openSession();

System.out.println("===== Start inserting data =====");

long startTime = System.currentTimeMillis();

int waitTime = 10;

try {

List<User> userList = new ArrayList<>();

for (int i = 1; i <= 300000; i++) {

User user = new User();

user.setId(i);

user.setUsername("共饮一杯无 " + i);

user.setAge((int) (Math.random() * 100));

userList.add(user);

if (i % 1000 == 0) {

session.insert("batchInsertUser", userList);

// Commit the transaction every 1,000 rows

session.commit();

userList.clear();

}

}

// Finally, insert any remaining rows

if(!CollectionUtils.isEmpty(userList)) {

session.insert("batchInsertUser", userList);

session.commit();

}

long spendTime = System.currentTimeMillis()-startTime;

System.out.println("Successfully inserted 300,000 rows in " + spendTime + " ms");

} catch (Exception e) {

e.printStackTrace();

} finally {

session.close();

}

}

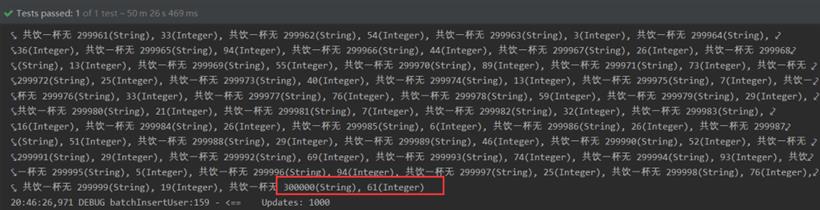

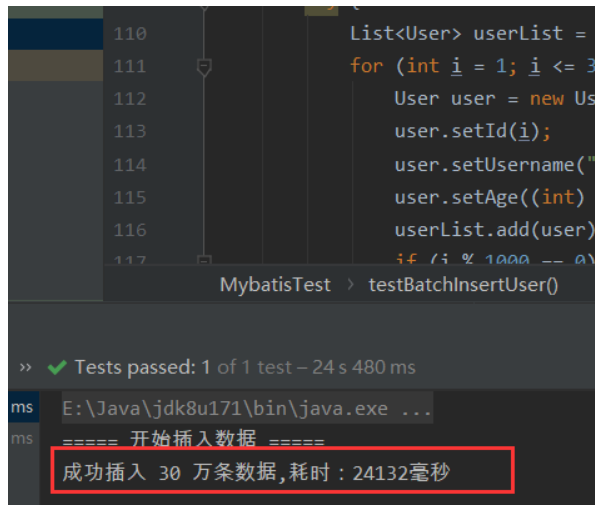

Without the wait between batches, the insert completes in 24 seconds:

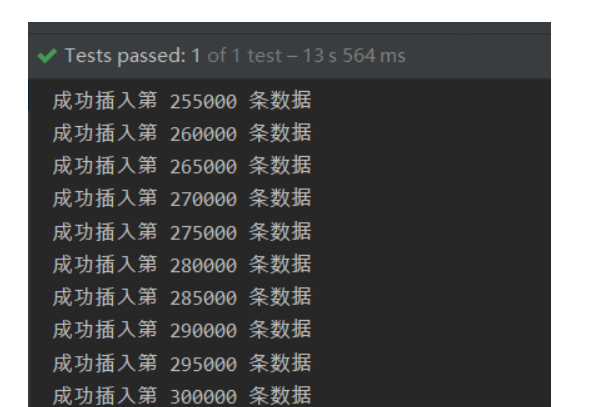
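The batched tests above repeat the same pattern: buffer rows, flush every 1,000, then flush whatever remains after the loop. As a minimal sketch, that pattern could be factored into a small helper. The class `BatchFlusher` and its methods are hypothetical names introduced here for illustration and do not appear in the original code.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;

/** Hypothetical helper: buffers items and hands them to a sink in fixed-size batches. */
final class BatchFlusher<T> {
    private final int batchSize;
    private final Consumer<List<T>> sink;
    private final List<T> buffer;

    BatchFlusher(int batchSize, Consumer<List<T>> sink) {
        if (batchSize <= 0) {
            throw new IllegalArgumentException("batchSize must be positive");
        }
        this.batchSize = batchSize;
        this.sink = sink;
        this.buffer = new ArrayList<>(batchSize);
    }

    /** Adds one item and flushes as soon as the buffer holds batchSize items. */
    void add(T item) {
        buffer.add(item);
        if (buffer.size() >= batchSize) {
            flush();
        }
    }

    /** Sends buffered items to the sink; call once after the loop for the remainder. */
    void flush() {
        if (buffer.isEmpty()) {
            return; // nothing left over, so no empty batch is sent
        }
        sink.accept(new ArrayList<>(buffer)); // pass a copy so the sink may keep it
        buffer.clear();
    }

    public static void main(String[] args) {
        List<Integer> sizes = new ArrayList<>();
        BatchFlusher<Integer> flusher = new BatchFlusher<>(1000, batch -> sizes.add(batch.size()));
        for (int i = 1; i <= 2500; i++) {
            flusher.add(i);
        }
        flusher.flush(); // handles the final partial batch
        System.out.println(sizes); // prints [1000, 1000, 500]
    }
}
```

In the MyBatis test, the sink would presumably be a lambda that calls `session.insert("batchInsertUser", batch)` followed by `session.commit()`, which keeps the remainder handling in one place.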

/**

* Batch insert with JDBC, split into chunks

* @throws IOException

*/

@Test

public void testJDBCBatchInsertUser() throws IOException {

Connection connection = null;

PreparedStatement preparedStatement = null;

String databaseURL = "jdbc:mysql://localhost:3306/test";

String user = "root";

String password = "root";

try {

connection = DriverManager.getConnection(databaseURL, user, password);

// Turn off auto-commit and commit manually instead

connection.setAutoCommit(false);

System.out.println("===== Start inserting data =====");

long startTime = System.currentTimeMillis();

String sqlInsert = "INSERT INTO t_user ( username, age) VALUES ( ?, ?)";

preparedStatement = connection.prepareStatement(sqlInsert);

Random random = new Random();

for (int i = 1; i <= 300000; i++) {

preparedStatement.setString(1, "共饮一杯无 " + i);

preparedStatement.setInt(2, random.nextInt(100));

// Add the row to the current batch

preparedStatement.addBatch();

if (i % 1000 == 0) {

// Execute the batch and commit every 1,000 rows

preparedStatement.executeBatch();

connection.commit();

System.out.println("Inserted " + i + " rows so far");

}

}

// Handle any remaining rows

preparedStatement.executeBatch();

connection.commit();

long spendTime = System.currentTimeMillis()-startTime;

System.out.println("Successfully inserted 300,000 rows in " + spendTime + " ms");

} catch (SQLException e) {

System.out.println("Error: " + e.getMessage());

} finally {

if (preparedStatement != null) {

try {

preparedStatement.close();

} catch (SQLException e) {

e.printStackTrace();

}

}

if (connection != null) {

try {

connection.close();

} catch (SQLException e) {

e.printStackTrace();

}

}

}

}